Monday, June 29, 2015

Examining performance for MongoDB and the insert benchmark

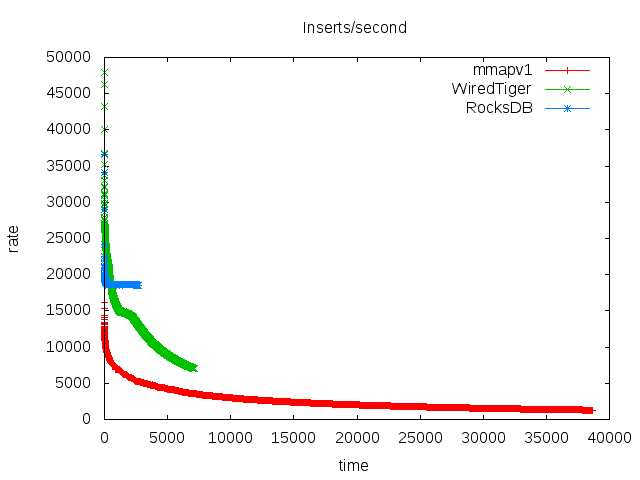

My previous post has results for the insert benchmark when the database fits in RAM. In this post I look at MongoDB performance as the database gets larger than RAM. I ran these tests while preparing for a talk and going on vacation, so I am vague on some of the configuration details. The summary is that RocksDB does much better than mmapv1 and the WiredTiger B-Tree when the database is larger than RAM because it is more IO efficient. RocksDB doesn't read index pages during non-unique secondary index maintenance. It also does fewer but larger writes rather than the many smaller/random writes required by a B-Tree. This is more of a benefit for servers that use disk.
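To make the IO argument concrete, here is a minimal toy model, not a measurement and not how either engine is implemented. It assumes a B-Tree must have the target leaf page in cache before inserting a secondary index entry (read-modify-write), while an LSM buffers the entry in a memtable and later writes it out in one large flush. The names `PAGE_KEYS`, `cache_pages`, and `memtable_keys` are hypothetical parameters chosen for illustration, and compaction IO for the LSM is ignored.

```python
import random
from collections import OrderedDict

PAGE_KEYS = 100  # hypothetical: index entries per B-Tree leaf page


class LRUCache:
    """Tiny LRU cache of leaf page ids, standing in for the buffer pool."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.pages = OrderedDict()

    def touch(self, page_id):
        """Return True on a cache hit; on a miss, load the page and return False."""
        if page_id in self.pages:
            self.pages.move_to_end(page_id)
            return True
        self.pages[page_id] = True
        if len(self.pages) > self.capacity:
            self.pages.popitem(last=False)  # evict least recently used page
        return False


def btree_index_reads(keys, cache_pages):
    """Count leaf-page reads needed for read-modify-write index inserts."""
    cache = LRUCache(cache_pages)
    reads = 0
    for key in keys:
        if not cache.touch(key // PAGE_KEYS):
            reads += 1  # leaf not cached: one random read before the insert
    return reads


def lsm_index_io(keys, memtable_keys):
    """Return (reads, flushes) for blind writes buffered in a memtable."""
    reads = 0  # a non-unique index insert needs no lookup in this model
    flushes = 0
    buffered = 0
    for _ in keys:
        buffered += 1
        if buffered == memtable_keys:
            flushes += 1  # one large sequential write
            buffered = 0
    return reads, flushes


def simulate(n_inserts=10_000, key_space=1_000_000,
             cache_pages=100, memtable_keys=1_000, seed=1):
    """Run both models on the same random key stream."""
    rng = random.Random(seed)
    keys = [rng.randrange(key_space) for _ in range(n_inserts)]
    lsm_reads, lsm_flushes = lsm_index_io(keys, memtable_keys)
    return {
        "btree_reads": btree_index_reads(keys, cache_pages),
        "lsm_reads": lsm_reads,
        "lsm_flushes": lsm_flushes,
    }


if __name__ == "__main__":
    print(simulate())
```

With the default parameters the index has 10,000 leaf pages but only 100 fit in cache, so almost every B-Tree insert pays for a random read, while the LSM side does no reads and a handful of large flushes. A real LSM also pays for compaction, so this only illustrates the direction of the difference, not its size.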


Great post.

Hi Mark, I have read most of your posts on Small Datum and learned a lot.

I tested MongoDB 3.4 with the RocksDB and WiredTiger engines using sysbench with a single thread and found that WiredTiger is 20% faster than RocksDB. The result is reproducible.

I am sure that WT is faster than MongoRocks for some workloads. But I have a few suggestions:

1) I take a lot of time to explain the workloads that I use. You have provided almost no information about the workload that you used.

2) There are many dimensions by which something can be better. These include database size, write efficiency and response time. When you write that WT is faster I assume you are describing response time, but you make no mention of space and write efficiency. Space efficiency determines how much SSD you need to buy to store your database. Write efficiency determines how long that SSD will last. While WT frequently wins on response time, MongoRocks usually wins on space and write efficiency.

Regardless, I like both WT and MongoRocks.