I ran the Insert Benchmark for Postgres versions 12.11, 13.7, 14.3 and 15b1. Reports are provided for cached and IO-bound workloads. The benchmark is run on Intel NUC servers at low concurrency. The goal is to determine how performance changes over time. A description of the Insert Benchmark is here.

tl;dr

- I am not a Postgres expert

- regressions are small in most cases

- the l.i0 benchmark step has regressions that are worth investigating in versions 14 and 15b1

- these regressions might have been fixed, see the perf report for the patch (15b1p1)

Updates:

- regressions have been fixed, scroll to the end

- added links to the configuration files

- part 2 with throughput vs time graphs is here

- provided data on the percentage of time in parse, analyze, optimize, execute

- added command lines

- added the index + relation sizes after each test step

- added links to performance reports when prepared statements were used for queries

- added links to performance reports with a patch to improve version 15b1

- added a second set of results for 15b1p1 (15b1 with the patch) for cached and IO-bound. Results are similar to the first set.

- livestreaming my debugging efforts, see Debugging the regression below

- added data from pg_stat_wal to the final section

- added results for 15b2 for cached and IO-bound

The regression

After I shared this blog post a possible cause for the regression was discussed

here and the community quickly provided

a patch for me to test. The results are excellent as 15b1p1 (15b1 with the patch) almost matches the results for version 13.7 on the cached workload. For the IO-bound workload the patch fixes the regression for all but one of the benchmark steps (l.i1).

The improvement is obvious in the cached workload

Summary. The improvement is also there for the IO-bound workload, but less obvious. In the IO-bound workload

Summary the throughput for l.i0 (load without secondary indexes) and q100.1 are better than results for version 14.3 and almost match version 13.7. While the throughput for l.i1 (inserts with secondary index maintenance) did not improve. From the

IO-bound metrics for l.i1 I see that CPU (cpupq == CPU per statement) has been reducing with each major release, but with the patch (15b1p1) the value increased to match the value for version 13.7. I don't see this effect in the

l.i1 metrics with the cached workload.

Summer has arrived and thermal throttling might be an issue for my SSDs which would be an unfortunate source of variance. The next step is to repeat the IO-bound workload at night when it is cooler and then look at CPU profiles for l.i1.

A guide to the performance reports

is here. For explaining performance the most interesting metric is cpupq (CPU/query or CPU/insert depending on the benchmark step). From the guide, cpupq

includes CPU by the client, DBMS and anything else running on the server. The benchmark client, written in Python, uses a lot of CPU. That can make it harder to see how CPU changes from the DBMS. With MySQL, I can also measure the CPU time used by mysqld. With Postgres I am still figuring out how to provide a DBMS-only measurement of the CPU overheads.

Test setup

Tests are run on Intel NUC servers I have at home. The OS is Ubuntu 20.04, hyperthreading and turbo-boost are disabled. All binaries are compiled using the same gcc/glibc toolchain and all tests used the same server.

The benchmark is repeated for a cached and IO-bound workload. The database fits in memory for the cached workload and is larger than memory for the IO-bound workload. The Postgres configuration files are here for 12.11, 13.7, 14.3 and 15b1.

The Insert Benchmark description has more details. In summary the benchmark has several steps and I focus on the ones where performance changes:

- l.i0 - load in PK order, the table that has no secondary indexes

- l.x - create three secondary indexes

- l.i1 - insert in PK order, the table that has 3 secondary indexes

- q100.1 - measure range query QPS from one client while another does 100 inserts/s

- q500.1 - measure range query QPS from one client while another does 500 inserts/s

- q1000.1 - measure range query QPS from one client while another does 1000 inserts/s

The l.i0 step inserts 20m (500m) rows for the cached (IO-bound) workload. The l.i1 step inserts 20m (10m) rows for the cached (IO-bound) workload. The q100.1, q500.1 and q1000.1 steps each run for 2 hours.

# old school, no prepared statementspython3 iibench.py --db_user=foo --db_host=127.0.0.1 \

--db_password=bar --dbms=postgres --db_name=ib --setup \

--query_threads=1 --max_rows=1000000 --secs_per_report=1

# try out the new support for prepared statements

python3 iibench.py --db_user=foo --db_host=127.0.0.1 \

--db_password=bar --dbms=postgres --db_name=ib --setup \

--query_threads=1 --max_rows=1000000 --secs_per_report=1 \

--use_prepared_insert --use_prepared_query

Cached workload

The reports are here for ibench without and with prepared statements used for queries. Next is a report for prepared statements and a patch for 15b1 (I call it 15b1p1) that fixes a CPU regression. Postgres query throughput improves by ~1.5X when prepared statements are used for queries. Prepared statements are not yet used for inserts because I need to debug CPU perf issues on the ibench client.

I use relative throughput to document performance changes. It is: my_throughput / base_throughput where base_throughput is the result from Postgres 13.7. The interesting changes are below. The results aren't surprising. In most cases the regressions are small because Postgres is good at avoiding them. The regressions for 15b1 are larger because it is a beta and a newer release.

Throughput relative to 13.7

version l.i0 l.i1 q100.1

14.3 0.934 0.971 0.971

15b1 0.856 0.946 0.953

The percentage of samples from pg_plan_queries, pg_parse_query, pg_analyze_and_rewrite and query execution from v14.3. Prepared statements were not used.

step plan parse analyze execute

l.i0 20 11 25 44

l.i1 8 5 10 77

q100.1 33 6 10 51

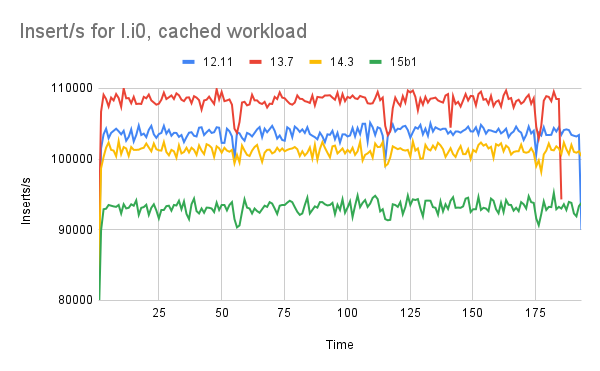

Cached - l.i0

The largest regressions are from the l.i0 step. While this test doesn't run for a long time, the results are similar for the IO-bound workload. Flamegraphs are here for 12.11, 13.7, 14.3 and 15b1. Differential flamegraphs are here for 13.7 vs 14.3 and 14.3 vs 15b1. For 15b1 I renamed ExecInitExprInternal to ExecInitExpr to match the names used in older versions.

I also have a benchmark report here for Postgres versions 14.0, 14.1, 14.2 and 14.3. There is little change across them so any regressions from 13.7 to 14.3 are likely in 13.7 to 14.0.

Before looking at the differential flamegraphs I compared the output by hand. For functions called by PostgresMain not much changes between versions in pg_plan_queries and pg_parse_queries. There is a small improvement for pg_analyze_and_rewrite and a small regression for PortalRun. The rest of the drill down is here with a focus on functions called under PortalRun. There are regressions in a few functions when ExecScan is on the call stack.

Percentage of samples for children of PostgresMain

12.11 13.7 14.3 15b1 function

----- ----- ----- ----- --------

21.50 20.27 20.44 19.84 pg_plan_queries

11.56 12.19 11.34 10.34 pg_parse_queries

25.76 25.70 24.92 22.64 pg_analyze_and_rewrite

33.02 33.06 34.94 37.31 PortalRun

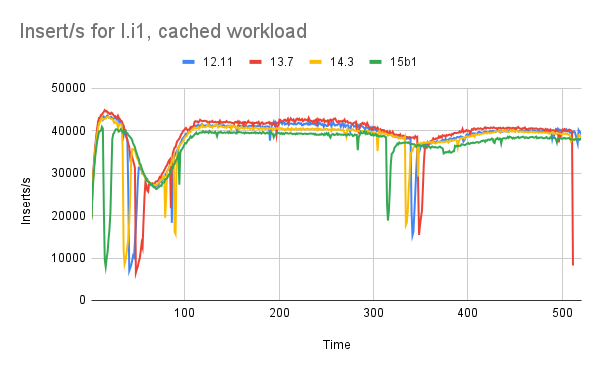

Cached - l.i1

Flamegraphs are here for 12.11, 13.7, 14.3 and 15b1. Differential flamegraphs are here for 13.7 vs 14.3 and 14.3 vs 15b1. I didn't do the summary by hand because the differences are small.

Cached - q100.1

Flamegraphs are here for 12.11, 13.7, 14.3 and 15b1. Differential flamegraphs are here for 13.7 vs 14.3 and 14.3 vs 15b1. I didn't do the summary by hand because the differences are small. ReadyForQuery is much less prominent in 15b1 relative to earlier versions. It isn't clear that the differential graph for 14.3 vs 15b1 is useful. Perhaps too much code has changed.

Table and index sizes

These are the sizes from pg_index_sizes, pg_relation_size and pg_total_relation_size. I suspect that the improvements (size reduction) in recent Postgres versions don't reproduce here because the workload is insert only.

Legend

* all sizes are in MB unless indicated with G (for GB)

* index is value from pg_index_sizes

* rel is value from pg_relation_size

* total is value from pg_total_relation_size

l.i0 - after load in PK order without secondary indexes

version index rel total

12.11 428 1531 1959

13.7 428 1531 1959

14.3 428 1531 1959

15b1 428 1531 1959

l.x - after create 3 secondary indexes

version index rel total

12.11 2243 1538 3782

13.7 2243 1538 3782

14.3 2243 1538 3782

15b1 2243 1538 3782

l.i1 - after load more data with 3 secondary indexes to maintain

version index rel total

12.11 5299 3069 8368

13.7 5308 3069 8378

14.3 5308 3069 8378

15b1 5308 3069 8378

q3.1000 - at the end of the read+write steps

version index rel total

12.11 8338 3951 12G

13.7 8333 3950 12G

14.3 8333 3950 12G

15b1 8333 3950 12G

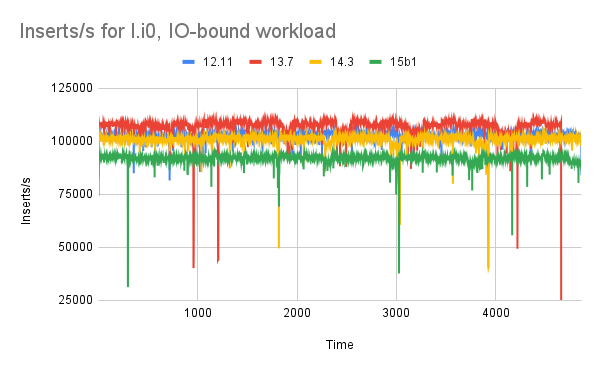

IO-bound workload

The reports are here for ibench without and with prepared statements used for queries. Next is a report for prepared statements and a patch for 15b1 (I call it 15b1p1) that fixes a CPU regression.

Postgres query throughput improves by ~1.5X when prepared statements are used for queries. Prepared statements are not yet used for inserts because I need to debug CPU perf issues on the ibench client. I still need to figure out why more IO isn't done per query for this workload given that it is IO bound and index indexes are much larger than memory.

I don't think the IO-bound report needs additional analysis as the regression for l.i0 is analyzed above. I also have a benchmark report here for Postgres versions 14.0, 14.1, 14.2 and 14.3. There is little change across them so any regressions from 13.7 to 14.3 are likely in 13.7 to 14.0.

Table and index sizes

These are the sizes from pg_index_sizes, pg_relation_size and pg_total_relation_size. I suspect that the improvements (size reduction) in recent Postgres versions don't reproduce here because the workload is insert only.

Legend

* all sizes are in GB

* index is value from pg_index_sizes

* rel is value from pg_relation_size

* total is value from pg_total_relation_size

l.i0 - after load in PK order without secondary indexes

version index rel total

12.11 10 37 48

13.7 10 37 48

14.3 10 37 48

15b1 10 37 48

l.x - after create 3 secondary indexes

version index rel total

12.11 55 37 92

13.7 55 37 92

14.3 55 37 92

15b1 55 37 92

l.i1 - after load more data with 3 secondary indexes to maintain

version index rel total

12.11 55 38 94

13.7 55 38 94

14.3 55 38 94

15b1 55 38 94

q3.1000 - at the end of the read+write steps

version index rel total

12.11 57 39 96

13.7 57 39 96

14.3 57 39 96

15b1 57 39 96

Debugging the regression

This section has details from trying to debug the regression while I collaborate with a few experts from the Postgres community. For the regression in the l.i1 benchmark step (load in PK order with 3 secondary indexes) on the IO-bound workload there is:

- ~7% increase in KB written per insert(wkbpi)

- ~7% increase in KB read per insert (rkbpq)

- ~12% increase in CPU per insert (cpupq), but note that CPU here includes everything on the host, including the benchmark client

From pg_stat_bgwriter there are differences between 15b1 and 15b1p1 ...

select * from pg_stat_bgwriter is here for pg15b1p1:

checkpoints_timed | 32

checkpoints_req | 6

checkpoint_write_time | 7734090

checkpoint_sync_time | 6471

buffers_checkpoint | 12444695

buffers_clean | 13580345

maxwritten_clean | 0

buffers_backend | 7593943

buffers_backend_fsync | 0

buffers_alloc | 19970817

stats_reset | 2022-06-23 21:45:38.834884-07

And then for pg15b1:

checkpoints_timed | 37

checkpoints_req | 2

checkpoint_write_time | 8283432

checkpoint_sync_time | 6109

buffers_checkpoint | 12753126

buffers_clean | 13702867

maxwritten_clean | 0

buffers_backend | 6500038

buffers_backend_fsync | 0

buffers_alloc | 20002787

stats_reset | 2022-06-20 16:55:15.35976-07

And finally the graph of throughput vs time for l.i1 shows some kind of stall for 15b1p1 that doesn't occur for 15b1, and this stall is repeatable. It occurs in both runs of the benchmark I did for 15b1p1.

First is the graph for 15b1 which don't have the stall. The x-axis is time, the y-axis is inserts/s and the results are reported by the benchmark client at 1-second intervals.

Next is the graph for 15b1p1 which has a stall.

From a few more tests, this error message doesn't occur with 15b1 but does occur with 15b1p1 and variations of 15b1p1 that exclude some of the patches:

2022-06-27 ... PDT [1004196] ERROR: canceling autovacuum task

The end

The regression is fixed and 15b1 with the patch now matches the performance from 13.7, so this fixed all of the regressions that arrived in version 14.

The fix for the regression comes in two parts. First, there is the patch that reduces CPU overhead. Second, I increased max_wal_size from 20G to 40G and that resolves some noise that shows up on the l.i1 (inserts with secondary index maintenenace) step. I started with the cx5 config that has max_wal_size set to 20G and then switched to the cx7 config that sets it to 40G.

The performance reports are here for the cached and IO-bound workloads.

The data below is from pg_stat_wal at the end of each benchmark step for versions 14.3, 15b1 and 15b1p1. All had wal_buffers = 16M. I don't know why wal_buffers_full and wal_write increased from 14.3 to 15b1, nor do I know whether that is an issue but this might explain whey I needed to increase max_wal_size from 20G to 40G. Most of that difference occurs during the l.x step (create secondary indexes).

from pg_stat_wal at end of l.i0

14.3 15b1 15b1p1

wal_records 1.0B 1.0B 1.0B

wal_fpi 3.0M 3.0M 2.6M

wal_bytes 109.2B 109.5B 106.4B

wal_buffers_full 0 0 0

wal_write 4.5M 4.6M 4.5M

wal_sync 103K 103K 99K

from pg_stat_wal at end of l.ix

14.3 15b1 15b1p1

wal_records 1.0B 1.0B 1.0B

wal_fpi 8.8M 9.3M 9.1M

wal_bytes 153.0B 157.1B 155.3B

wal_buffers_full 2.3M 3.5M 3.6M

wal_write 6.9M 8.2M 8.1M

wal_sync 107K 106K 102K

from pg_stat_wal at end of l.i1

14.3 15b1 15b1p1

wal_records 1.1B 1.1B 1.1B

wal_fpi 26.3M 26.8M 26.5M

wal_bytes 285.5B 289.7B 287.4B

wal_buffers_full 2.3M 3.5M 3.6M

wal_write 7.1M 8.4M 8.3M

wal_sync 207K 207K 202K

CREATE INDEX in 15b2

Create index is ~3% slower for the IO-bound workload. See the summary and the metrics. I can reproduce this. The config file for these tests has max_parallel_workers = 0 so I assume that parallel index create isn't done.

From the metrics, this doesn't look like a CPU regression although I had to consult the vmstat output to confirm because the metrics page just shows cpupq = 4 for both. The average CPU utilization (us) is lower for 15b2 but the CPU/work is similar because the value of us * Nsamp is similar for 15b1 and 15b2 (Nsamp is the number of vmstat lines processed). Samples were take every second.

Averages from vmstatcat 500m.pg15b1.cx7/l.x/o.vm.dop1 \

| grep -v procs | grep -v swpd \

| awk '{ c+=1; s12+=$12; s13+=$13; s14+=$14; s15+=$15; s16+=$16 } END { printf "%s\t%.1f\t%.1f\t%.1f\t%.1f\t%.1f\n", c, s12/c, s13/c, s14/c, s15/c, s16/c }'

version Nsamp cs us sy id wa

15b1 415 769.7 21.3 3.0 73.1 2.6

15b2 426 752.2 20.8 3.0 73.0 3.2

From the metrics this also doesn't look like an IO regression because wkbpi and rkbpq haven't changed. For this benchmark step wkbpi is KB-written / Num-rows and rkbpq is KB-read / Num-rows where KB-written and KB-read are measured by iostat and Num-rows is the number of rows in the indexed table.

But from iostat output the values for r_await and w_await are larger for 15b2. HW (SSD thermal throttling in this case) is one cause, but programmers always love to start by blaming the HW. I will repeat this at night and let 15b2 run first.cat 500m.pg15b1.cx7/l.x/o.io.dop1 \

| grep nvme \

| awk '{ c+=1; s2+=$2; s3+=$3; s4+=$4; s5+=$5; s6+=$6; s7+=$7; s8+=$8; s9+=$9; s10+=$10; s11+=$11; s12+=$12; s13+=$13 } END { printf "%s\t%.1f\t%.1f\t%.3f\t%.3f\t%.3f\t%.1f\t%.1f\t%.1f\t%.3f\t%.3f\t%.3f\t%.1f\n", c, s2/c, s3/c, s4/c, s5/c, s6/c, s7/c, s8/c, s9/c, s10/c, s11/c, s12/c, s13/c }'

version Nsamp r/s rkB/s rrqm/s %rrqm r_await rareq-sz w/s wkB/s wrqm/s %wrqm w_await wareq-sz

15b1 414 666.0 84702.1 0.000 0.000 0.195 113.5 228.6 91061.3 3.820 2.048 6.010 331.7

15b2 425 648.2 82450.5 0.000 0.000 0.224 114.4 218.1 88508.5 3.428 2.017 7.271 342.5