In this post I share results for IO-bound sysbench on a fast server using MyRocks, InnoDB and TokuDB.

tl;dr

- MyRocks is more space efficient than InnoDB. InnoDB uses ~2.3X more space than compressed MyRocks and ~1.1X more space than uncompressed MyRocks.

- MyRocks is more write efficient than InnoDB. InnoDB writes ~7.9X more to storage per update than MyRocks on the update-index test.

- For full index scans InnoDB 5.6 is ~2X faster than MyRocks. But with readahead enabled, uncompressed MyRocks is ~2X faster than InnoDB 5.6 and comparable to InnoDB 5.7/8.0.

- MyRocks is similar to or better than InnoDB 5.7/8.0 for three of the four update-only tests. The exception is update-one, and that test has a cached working set.

- MyRocks is similar to InnoDB 5.6 on the insert only test.

- MyRocks matches InnoDB 5.7/8.0 for read-write with range-size=100 (the default). It does worse with range-size=10000.

- MyRocks is similar to InnoDB 5.6 for read-only with range-size=100 (the default). It does worse with range-size=10000. InnoDB 5.7/8.0 do better than InnoDB 5.6.

- Results for point-query are mixed. MyRocks does worse than InnoDB 5.6, while InnoDB 5.7/8.0 do slightly better than InnoDB 5.6 before the write-heavy tests and slightly worse after them.

- Results for random-points are also mixed and similar to point-query.

Configuration

My usage of sysbench is described here. The test server has 48 HW threads, fast SSD and 50gb of RAM. The database block cache (buffer pool) was 10gb for MyRocks and TokuDB and 35gb for InnoDB. MyRocks and TokuDB used buffered IO while InnoDB used O_DIRECT. Sysbench was run with 8 tables and 100M rows/table. Tests were repeated for 1, 2, 4, 8, 16, 24, 32, 40, 48 and 64 concurrent clients. At each concurrency level the read-only tests run for 180 seconds, the write-heavy tests for 300 seconds and the insert test for 180 seconds.
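The run schedule above can be tallied with a short Python sketch. It assumes each concurrency level is one run of the stated duration and ignores setup, warmup and the time to load the tables, so the totals are lower bounds per test and per engine. The names are illustrative.

```python
# Concurrency levels and per-level durations from the configuration above.
CONCURRENCY = [1, 2, 4, 8, 16, 24, 32, 40, 48, 64]
SECONDS_PER_LEVEL = {"read-only": 180, "write-heavy": 300, "insert": 180}

TABLES = 8
ROWS_PER_TABLE = 100_000_000


def total_rows() -> int:
    """Rows loaded before the tests start."""
    return TABLES * ROWS_PER_TABLE


def seconds_per_test(kind: str) -> int:
    """Lower bound on run time for one test across all concurrency levels.

    Assumes one run per concurrency level and ignores setup and warmup.
    """
    return SECONDS_PER_LEVEL[kind] * len(CONCURRENCY)


if __name__ == "__main__":
    print(f"rows: {total_rows():,}")
    for kind in SECONDS_PER_LEVEL:
        print(f"{kind}: {seconds_per_test(kind) / 60:.0f} minutes")
```

With 10 concurrency levels, each read-only test takes at least 30 minutes and each write-heavy test at least 50 minutes for one engine.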

Tests were run for MyRocks, InnoDB from upstream MySQL, InnoDB from FB MySQL and TokuDB. The binlog was enabled but sync on commit was disabled for the binlog and database log. All engines used jemalloc. Mostly accurate my.cnf files are here.

- MyRocks was compiled on October 16 with git hash 1d0132. Tests were repeated with and without compression. The configuration without compression is called MyRocks.none in the rest of this post. The configuration with compression is called MyRocks.zstd and used zstandard for the max level, no compression for L0/L1/L2 and lz4 for the other levels.

- Upstream 5.6.35, 5.7.17, 8.0.1, 8.0.2 and 8.0.3 were used with InnoDB. SSL was disabled and 8.x used the same charset/collation as previous releases.

- InnoDB from FB MySQL 5.6.35 was compiled on June 16 with git hash 52e058.

- TokuDB was from Percona Server 5.7.17. Tests were done without compression and then with zlib compression.
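The per-level compression used for the compressed MyRocks configuration can be written as a lookup. This is a sketch that assumes 7 LSM levels (L0 through L6, the RocksDB default for num_levels) with L6 as the max level; the post does not state the level count, and the function name is hypothetical.

```python
def compression_for_level(level: int, num_levels: int = 7) -> str:
    """Return the compression used at an LSM level in the compressed config.

    Assumes num_levels=7 (L0..L6); the post does not state the level count.
    """
    if not 0 <= level < num_levels:
        raise ValueError(f"level {level} outside L0..L{num_levels - 1}")
    if level == num_levels - 1:  # max (bottommost) level gets zstandard
        return "zstd"
    if level <= 2:  # L0, L1 and L2 are not compressed
        return "none"
    return "lz4"  # every other level


if __name__ == "__main__":
    for lvl in range(7):
        print(f"L{lvl}: {compression_for_level(lvl)}")
```

A common rationale for this layout is that most of the data lives in the max level, so the strongest compression pays off there, while the small upper levels are rewritten often by compaction and are cheaper to leave uncompressed or use a fast codec.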

The performance schema was enabled for upstream InnoDB and TokuDB. It was disabled at compile time for MyRocks and InnoDB from FB MySQL because FB MySQL 5.6 has user & table statistics for monitoring.

Results

All of the data for the tests is on github. Graphs for each test are below. The graphs show the QPS for a test relative to the QPS for InnoDB 5.6.35 and a value > 1 means the engine gets more QPS than InnoDB 5.6.35. The graphs have data for tests with 1, 8 and 48 concurrent clients and I refer to these as low, mid and high concurrency. The tests are explained here and the results are in the order in which the tests are run except where noted below. The graphs exclude results for InnoDB from FB MySQL to improve readability.
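The normalization used in the graphs can be sketched as follows. The `{clients: qps}` input shape and the function names are illustrative assumptions, not the format of the data on github.

```python
# Concurrency levels shown in the graphs and the labels used in the text.
LABELS = {1: "low", 8: "mid", 48: "high"}


def relative_qps(qps: float, baseline_qps: float) -> float:
    """QPS of an engine relative to the InnoDB 5.6.35 baseline.

    A value > 1 means the engine got more QPS than the baseline.
    """
    if baseline_qps <= 0:
        raise ValueError("baseline QPS must be positive")
    return qps / baseline_qps


def graph_points(engine: dict, baseline: dict) -> dict:
    """Map low/mid/high to relative QPS, given {clients: qps} dicts."""
    return {label: relative_qps(engine[c], baseline[c]) for c, label in LABELS.items()}
```

Dividing by the baseline at the same concurrency level keeps each point comparable even though absolute QPS varies widely between 1 and 48 clients.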

space and write efficiency

MyRocks is more space and write efficient than InnoDB.

- InnoDB uses 2.27X more space than compressed MyRocks and 1.12X more space than uncompressed MyRocks.

- InnoDB writes ~7.9X more to storage per update than MyRocks on the update-index test.
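Because both space ratios above are relative to the same InnoDB database size, they also imply how much zstandard compression saved MyRocks: uncompressed MyRocks is roughly 2X the size of compressed MyRocks. A worked check in Python, plus the per-update write ratio. The `write_ratio` inputs (bytes written to storage and the number of updates) are assumed to come from storage counters, which the post does not describe.

```python
# Space ratios reported above, both relative to the same InnoDB database size.
INNODB_VS_MYROCKS_ZSTD = 2.27
INNODB_VS_MYROCKS_NONE = 1.12


def implied_compression_ratio(vs_compressed: float, vs_uncompressed: float) -> float:
    """Size of uncompressed MyRocks divided by size of compressed MyRocks.

    innodb/zstd = a and innodb/none = b imply none/zstd = a/b.
    """
    return vs_compressed / vs_uncompressed


def write_ratio(bytes_a: float, updates_a: int, bytes_b: float, updates_b: int) -> float:
    """Ratio of storage bytes written per update for engine A vs engine B."""
    return (bytes_a / updates_a) / (bytes_b / updates_b)


if __name__ == "__main__":
    ratio = implied_compression_ratio(INNODB_VS_MYROCKS_ZSTD, INNODB_VS_MYROCKS_NONE)
    print(f"uncompressed MyRocks is ~{ratio:.2f}X larger than compressed MyRocks")
```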

scan

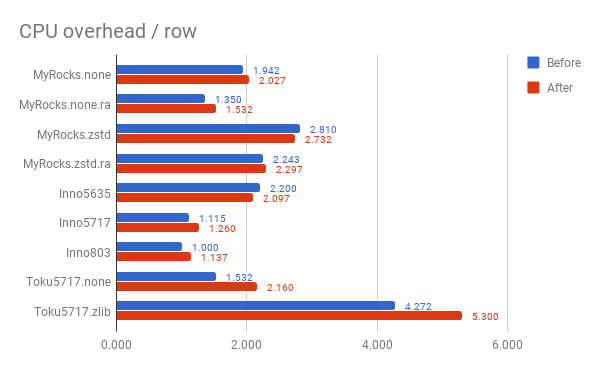

This has data on the time to do a full scan of the PK index before and after the write-heavy tests:

- InnoDB 5.6 is ~2X faster than MyRocks without readahead. I don't think the InnoDB PK suffers from fragmentation with sysbench. Had that been a problem then the gap would have been smaller.

- MyRocks with readahead is almost 2X faster than InnoDB 5.6.

- InnoDB 5.7/8.0 is faster than InnoDB 5.6

- For MyRocks and InnoDB 5.6 the scans before and after write-heavy tests have similar performance but for InnoDB 5.7/8.0 the scan after the write-heavy tests was faster. The scan was faster after because the storage read rate was much better as shown in the second graph. But the CPU overhead/row was larger for the scan after write-heavy tests. This is a mystery.
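Scan time, throughput and CPU per row are separate metrics, which is how a scan can finish sooner while using more CPU per row: if the scan is limited by storage, a better read rate can outweigh the extra CPU. A small sketch of the arithmetic, assuming every full scan covers all 8 tables x 100M rows; the function names are illustrative and no measured times are filled in.

```python
# Rows read by one full PK scan, assuming every scan covers all
# 8 tables x 100M rows (the post does not say how the scan is split).
ROWS_SCANNED = 8 * 100_000_000


def rows_per_second(scan_seconds: float, rows: int = ROWS_SCANNED) -> float:
    """Scan throughput given the wall-clock time of the full scan."""
    if scan_seconds <= 0:
        raise ValueError("scan time must be positive")
    return rows / scan_seconds


def speedup(slower_seconds: float, faster_seconds: float) -> float:
    """How many times faster one scan is than another (e.g. ~2X)."""
    return slower_seconds / faster_seconds


def cpu_us_per_row(cpu_seconds: float, rows: int = ROWS_SCANNED) -> float:
    """CPU microseconds per row, one way to express CPU overhead/row."""
    return cpu_seconds * 1e6 / rows
```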

update-inlist

The workload updates 100 rows per statement via an in-list and doesn't need index maintenance. Interesting results:

- MyRocks is similar to InnoDB at low concurrency

- MyRocks is the best at mid and high concurrency. It benefits from read-free secondary index maintenance.

- InnoDB 5.7 & 8.0 are better than InnoDB 5.6 at mid and high concurrency.

update-one

While the configuration is IO-bound, the test updates only one row so the working set is cached. Interesting results:

- MyRocks suffers at high concurrency. I did not debug this.

- InnoDB 5.7/8.0 are worse than InnoDB 5.6 at low concurrency and better at high concurrency. Overhead from new non-InnoDB code hurts at low concurrency, while InnoDB code improvements help at high concurrency.

update-index

The workload here needs secondary index maintenance. Interesting results:

- MyRocks is better here than on other write-heavy tests relative to InnoDB because non-unique secondary index maintenance is read-free.

- InnoDB 5.7/8.0 do much better than InnoDB 5.6 at mid and high concurrency

- Relative to InnoDB 5.6, the other engines do better at mid than at high concurrency. I did not debug this.
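Read-free secondary index maintenance is why MyRocks does relatively better on this test. A toy model in Python that counts only the storage reads needed to maintain one non-unique secondary index when an update changes the indexed column. It assumes a cold cache and ignores InnoDB's change buffer; the class and function names are illustrative, not engine code.

```python
from dataclasses import dataclass, field


@dataclass
class SecondaryIndex:
    """Toy non-unique secondary index holding (key, pk) entries."""
    entries: set = field(default_factory=set)
    page_reads: int = 0


# In both functions the old key value comes from the PK row, which both
# engines read anyway, so that read is not counted here.

def btree_update(idx: SecondaryIndex, pk: int, old_key: int, new_key: int) -> None:
    """Update-in-place B-tree: read the leaf holding the old entry to remove it,
    then read the leaf where the new entry belongs to insert it."""
    idx.page_reads += 1  # read leaf page for (old_key, pk)
    idx.entries.discard((old_key, pk))
    idx.page_reads += 1  # read leaf page for (new_key, pk)
    idx.entries.add((new_key, pk))


def lsm_update(idx: SecondaryIndex, pk: int, old_key: int, new_key: int) -> None:
    """LSM: blind-write a delete marker for the old entry and a put for the new
    one; compaction merges them later, so no index read is needed."""
    idx.entries.discard((old_key, pk))  # stands in for a delete marker
    idx.entries.add((new_key, pk))      # stands in for a put
```

Both variants end with the same index contents; only the B-tree pays reads. InnoDB's change buffer can defer some of this work for non-unique secondary indexes, so the model overstates InnoDB's cost.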

update-nonindex

The workload here doesn't require secondary index maintenance. Interesting results:

- MyRocks is similar to InnoDB 5.7/8.0. All are better than InnoDB 5.6.

read-write range=100

Interesting results:

- MyRocks is similar to InnoDB 5.7/8.0. Both are slightly better than InnoDB 5.6 at mid concurrency and much better at high. I didn't debug the difference at high concurrency. Possible problems for InnoDB 5.6 include write-back stalls and mutex contention.

read-write range=10000

Interesting results:

- MyRocks is better than InnoDB 5.6

- InnoDB 5.7/8.0 are better than MyRocks. Long range scans are more efficient for InnoDB starting in 5.7 and this test scans 10,000 rows per statement versus 100 rows in the previous section.

read-only range=100

This test scans 100 rows/query. Interesting results:

- MyRocks is similar to or better than InnoDB 5.6 except at high concurrency. For the read-write tests above MyRocks benefits from faster writes, but this test is read-only.

- InnoDB 5.7/8.0 are similar to or better than InnoDB 5.6. Range scan improvements offset the cost of new code.
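The range-size parameter controls how many rows each range query reads. A hypothetical query builder showing the shape; the `sbtestN` table naming and the `SELECT c` list are assumptions modeled on the standard sysbench schema, not copied from the test scripts.

```python
def range_query(table: int, start_id: int, range_size: int) -> str:
    """Build a range query that reads range_size rows by primary key.

    Table naming and SELECT list are assumptions modeled on sysbench.
    """
    if range_size < 1:
        raise ValueError("range_size must be >= 1")
    end_id = start_id + range_size - 1  # BETWEEN is inclusive on both ends
    return f"SELECT c FROM sbtest{table} WHERE id BETWEEN {start_id} AND {end_id}"
```

With range-size=10000 each statement reads 100X more rows than with range-size=100, which makes per-row scan efficiency matter more in those results.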

read-only.pre range=10000

This test scans 10,000 rows/query and is run before the write-heavy tests. Interesting results:

- MyRocks is similar to or slightly worse than InnoDB 5.6

- InnoDB 5.7/8.0 are better than InnoDB 5.6

read-only range=10000

This test scans 10,000 rows/query and is run after the write-heavy tests. Interesting results:

- MyRocks is similar to or slightly worse than InnoDB 5.6

- InnoDB 5.7/8.0 are better than InnoDB 5.6 at mid and high concurrency

- The differences relative to InnoDB 5.6 are smaller here than in read-only.pre, which is run before the write-heavy tests.

point-query.pre

This test is run before the write-heavy tests. Interesting results:

- MyRocks is slightly worse than InnoDB 5.6

- InnoDB 5.7/8.0 are slightly better than InnoDB 5.6

point-query

This test is run after the write-heavy tests. Interesting results:

- MyRocks and InnoDB 5.7/8.0 are slightly worse than InnoDB 5.6

random-points.pre

This test is run before the write-heavy tests. Interesting results:

- MyRocks and InnoDB 5.7/8.0 do better than InnoDB 5.6 at low concurrency but their advantage decreases at mid and high concurrency.

random-points

This test is run after the write-heavy tests. Interesting results:

- MyRocks and InnoDB 5.7 are slightly worse than InnoDB 5.6

- InnoDB 8.0 is slightly better than InnoDB 5.6

hot-points

The working set for this test is cached and the results are similar to the in-memory benchmark.

insert

Interesting results:

- MyRocks is worse than InnoDB 5.6 at low/mid concurrency and slightly better at high.

- InnoDB 5.7/8.0 are similar to or worse than InnoDB 5.6 at low/mid concurrency and better at high concurrency.